Fundamentals of Markov Chains

By Juan M. Gutierrez

A Markov chain is a mathematical system that undergoes transitions from one state to another according to certain probability rules. The defining characteristic of a Markov chain is that no matter how the process reaches the current state, the possible future states are fixed. In other words, the probability of transitioning to any particular state depends only on the current state and the elapsed time. The state space, or the collection of all possible states, can be anything: letters, numbers, weather conditions, baseball scores, or stock performance.

Markov chains can be modeled by finite state machines, and random walks provide a rich example of their usefulness in mathematics. They appear widely in statistics and information theory, and are used in economics, game theory, queueing (communication) theory, genetics, and finance. Although it is possible to discuss Markov chains with state spaces of any size, the initial theory and most applications focus on the case of a finite (or countably infinite) number of states.

Basic Concepts

A Markov chain is a random process, but it differs from a general random process in that it must be "memoryless". In other words, the probability of future actions does not depend on the steps that led to the current state. This is called the Markov property. Markov chain theory is important because so many everyday processes satisfy the Markov property, but there are also many common examples of random processes that do not satisfy it.

A common probability question asks for the probability of getting a ball of a certain colour when it is chosen at random from a bag of several coloured balls. You can also ask for the probability of the colour of the next ball, and so on. This gives rise to a stochastic process, with colour as the random variable, that does not satisfy the Markov property: depending on which balls have already been removed, the probability of getting a ball of a given colour later on can be drastically different.

A variant of the same question again asks for the colour of the ball, but allows it to be replaced each time a ball is drawn. Again, a stochastic process is created with the colour as a random variable. However, this process satisfies the Markov property. Can you work out why?
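The contrast between the two ball-drawing processes can be checked with a small sketch. The bag contents (3 red, 2 blue) and the helper name `prob_red_next` are assumptions made up for illustration. The code compares two histories that end in the same current colour and asks whether the earlier draw still affects the next probability.

```python
from fractions import Fraction

def prob_red_next(bag, drawn, replace):
    """Probability that the next ball is red, given the colours drawn so far."""
    red, blue = bag
    if not replace:
        # Without replacement, every earlier draw changes what is left in the bag.
        red -= drawn.count("red")
        blue -= drawn.count("blue")
    return Fraction(red, red + blue)

bag = (3, 2)  # hypothetical bag: 3 red balls, 2 blue balls

# Two histories with the same current state (the last ball drawn was red)
history_a = ["red", "red"]
history_b = ["blue", "red"]

without_a = prob_red_next(bag, history_a, replace=False)
without_b = prob_red_next(bag, history_b, replace=False)
with_a = prob_red_next(bag, history_a, replace=True)
with_b = prob_red_next(bag, history_b, replace=True)

print("without replacement:", without_a, without_b)  # 1/3 2/3
print("with replacement:   ", with_a, with_b)        # 3/5 3/5
```

Without replacement, the two histories give different probabilities even though the current colour is the same, so the colour sequence is not memoryless. With replacement, the bag is restored after every draw and the history drops out.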

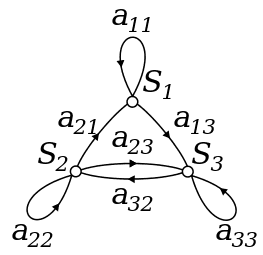

In probability theory, the simplest example is a time-homogeneous Markov chain, in which the probability of any state transition is independent of time. Such a process can be represented by a labeled directed graph in which the labels on the outgoing edges of any vertex sum to 1. The diagram shows a time-homogeneous Markov chain composed of states A and B. What is the probability that a process starting from A is in state B after two steps?

The process can either stay at A and then move to B, or move to B and then stay there, so the probability is 0.3 × 0.7 + 0.7 × 0.2 = 0.35.
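The same answer can be reproduced by enumerating every two-step path, as in the minimal sketch below. The one-step probabilities are an assumption inferred from the calculation above (A to A 0.3, A to B 0.7, B to B 0.2, and therefore B to A 0.8, since outgoing probabilities sum to 1). The function name `n_step_probability` is hypothetical.

```python
from itertools import product

# Assumed one-step probabilities, inferred from the two-step calculation above
P = {
    "A": {"A": 0.3, "B": 0.7},
    "B": {"A": 0.8, "B": 0.2},
}

def n_step_probability(P, start, end, steps):
    """Sum the probabilities of every path of the given length from start to end."""
    states = list(P)
    total = 0.0
    # Enumerate all choices for the intermediate states of the path.
    for middle in product(states, repeat=steps - 1):
        path = (start, *middle, end)
        prob = 1.0
        for src, dst in zip(path, path[1:]):
            prob *= P[src][dst]
        total += prob
    return total

two_step = n_step_probability(P, "A", "B", 2)
print(round(two_step, 2))  # 0.35
```

Path enumeration grows exponentially with the number of steps, so it is only practical for short horizons.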

Transition Matrices

A transition matrix $P_t$ for a Markov chain $\{X\}$ at time $t$ is a matrix containing information on the probability of transitioning between states. In particular, given an ordering of the matrix's rows and columns by the state space $S$, the $(i, j)$-th element of $P_t$ is

$$(P_t)_{i,j} = \mathbb{P}(X_{t+1} = j \mid X_t = i).$$

This means each row of the matrix is a probability vector, and the sum of its entries is 1.
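The row-sum property gives a quick sanity check for any candidate transition matrix. The sketch below is one possible check, not a standard library routine. The function name `is_transition_matrix` and both sample matrices are made up for illustration.

```python
import math

def is_transition_matrix(matrix, tol=1e-9):
    """Return True if matrix is square, non-negative, and every row sums to 1."""
    n = len(matrix)
    for row in matrix:
        if len(row) != n:
            return False  # not square
        if any(p < 0 for p in row):
            return False  # probabilities cannot be negative
        if not math.isclose(sum(row), 1.0, abs_tol=tol):
            return False  # row is not a probability vector
    return True

valid = is_transition_matrix([[0.5, 0.5], [0.1, 0.9]])
invalid = is_transition_matrix([[0.5, 0.6], [0.1, 0.9]])  # first row sums to 1.1
print(valid, invalid)  # True False
```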

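For our example, reading the probabilities off the two-step calculation above (the transition from B to A must be 0.8, since each row sums to 1), and ordering rows and columns as A, B:

$$P = \begin{pmatrix} 0.3 & 0.7 \\ 0.8 & 0.2 \end{pmatrix}$$

It follows that the 2-step transition matrix is:

$$P^2 = \begin{pmatrix} 0.65 & 0.35 \\ 0.40 & 0.60 \end{pmatrix}$$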
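For a time-homogeneous chain, the n-step transition matrix is the n-th power of the one-step matrix. The sketch below computes it with plain Python lists. It assumes the two-state example has one-step probabilities A to A 0.3, A to B 0.7, B to A 0.8 and B to B 0.2, as inferred from the earlier two-step calculation. The helper names `mat_mul` and `mat_pow` are hypothetical.

```python
def mat_mul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
        for i in range(len(X))
    ]

def mat_pow(P, n):
    """Return the n-step transition matrix P^n by repeated multiplication."""
    size = len(P)
    # Start from the identity matrix, which is the 0-step transition matrix.
    result = [[1.0 if i == j else 0.0 for j in range(size)] for i in range(size)]
    for _ in range(n):
        result = mat_mul(result, P)
    return result

# Assumed one-step matrix, rows and columns ordered A, B
P = [[0.3, 0.7],
     [0.8, 0.2]]

P2 = mat_pow(P, 2)
print([[round(x, 2) for x in row] for row in P2])  # [[0.65, 0.35], [0.4, 0.6]]
```

The entry in row A, column B is 0.35, which agrees with the probability of going from A to B in two steps.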

Ergodic Markov Chains

If a Markov chain is aperiodic and positive recurrent, it is known as ergodic. Ergodic Markov chains are the best-behaved processes.
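One way to see this good behavior numerically is that, for an irreducible ergodic chain, the distribution over states settles to the same limit whatever the starting state. The sketch below illustrates this under assumptions. It uses a two-state chain with probabilities A to A 0.3, A to B 0.7, B to A 0.8 and B to B 0.2, which is finite, irreducible and aperiodic. The helper name `step` is hypothetical.

```python
def step(dist, P):
    """Advance a probability distribution over states by one step of the chain."""
    n = len(P)
    return [sum(dist[i] * P[i][j] for i in range(n)) for j in range(n)]

# Assumed one-step matrix, rows and columns ordered A, B
P = [[0.3, 0.7],
     [0.8, 0.2]]

from_a = [1.0, 0.0]  # start in A with certainty
from_b = [0.0, 1.0]  # start in B with certainty
for _ in range(60):
    from_a = step(from_a, P)
    from_b = step(from_b, P)

print([round(x, 4) for x in from_a])  # [0.5333, 0.4667]
print([round(x, 4) for x in from_b])  # [0.5333, 0.4667]
```

Both starting points converge to (8/15, 7/15), the distribution that is left unchanged by one more step of this chain.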

Markov chains to predict market trends

Markov chains and their respective diagrams can be used to model the probabilities of certain financial market climates, and thus to predict the likelihood of future market conditions [7]. These conditions, also known as trends, are:

- Bull markets: periods of time where prices generally are rising, due to the actors having optimistic hopes of the future.
- Bear markets: periods of time where prices generally are declining, due to the actors having a pessimistic view of the future.
- Stagnant markets: periods of time where the market is characterized by neither a decline nor rise in general prices.
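A three-state chain over these trends can be sketched as follows. The weekly transition probabilities are purely illustrative assumptions, not estimates from market data. The names `STATES`, `P` and `forecast` are hypothetical.

```python
STATES = ["bull", "bear", "stagnant"]

# Illustrative weekly transition probabilities (assumed, not fitted to data).
# Row = current trend, column = next week's trend, in the order of STATES.
P = [
    [0.900, 0.075, 0.025],  # from bull
    [0.150, 0.800, 0.050],  # from bear
    [0.250, 0.250, 0.500],  # from stagnant
]

def forecast(start, weeks):
    """Return the probability of each trend after the given number of weeks."""
    dist = [1.0 if s == start else 0.0 for s in STATES]
    for _ in range(weeks):
        dist = [
            sum(dist[i] * P[i][j] for i in range(len(STATES)))
            for j in range(len(STATES))
        ]
    return dict(zip(STATES, dist))

one_week = forecast("bull", 1)
three_weeks = forecast("bear", 3)
print(one_week)
print(three_weeks)
```

In practice the entries of `P` would be estimated from historical data, for example by counting how often each trend followed each other trend.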

